OpenAI Chat

Spring AI supports ChatGPT, the AI language model from OpenAI. ChatGPT has been instrumental in sparking interest in AI-driven text generation, thanks to OpenAI's industry-leading text generation models and embeddings.

Prerequisites

You will need to create an API key with OpenAI to access ChatGPT models. Create an account at the OpenAI signup page and generate the token on the API Keys page.

The Spring AI project defines a configuration property named spring.ai.openai.api-key that you should set to the value of the API key obtained from openai.com. Exporting an environment variable is one way to set that configuration property:

```shell
export SPRING_AI_OPENAI_API_KEY=<INSERT KEY HERE>
```

Add Repositories and BOM

Spring AI artifacts are published in the Spring Milestone and Snapshot repositories. Refer to the Repositories section to add these repositories to your build system.

To help with dependency management, Spring AI provides a BOM (bill of materials) that ensures a consistent version of Spring AI is used throughout the entire project. Refer to the Dependency Management section to add the Spring AI BOM to your build system.

Auto-configuration

Spring AI provides Spring Boot auto-configuration for the OpenAI Chat Client. To enable it, add the following dependency to your project's Maven pom.xml file:

```xml
<dependency>
    <groupId>org.springframework.ai</groupId>
    <artifactId>spring-ai-openai-spring-boot-starter</artifactId>
</dependency>
```

or to your Gradle build.gradle build file:

```groovy
dependencies {
    implementation 'org.springframework.ai:spring-ai-openai-spring-boot-starter'
}
```

Tip: Refer to the Dependency Management section to add the Spring AI BOM to your build file.

Chat Properties

Retry Properties

The prefix spring.ai.retry is the property prefix that lets you configure the retry mechanism for the OpenAI Chat client.

| Property | Description | Default |
|---|---|---|
| spring.ai.retry.max-attempts | Maximum number of retry attempts. | 10 |
| spring.ai.retry.backoff.initial-interval | Initial sleep duration for the exponential backoff policy. | 2 sec. |
| spring.ai.retry.backoff.multiplier | Backoff interval multiplier. | 5 |
| spring.ai.retry.backoff.max-interval | Maximum backoff duration. | 3 min. |
| spring.ai.retry.on-client-errors | If false, throw a NonTransientAiException and do not attempt a retry for client errors. | false |
| spring.ai.retry.exclude-on-http-codes | List of HTTP status codes that should not trigger a retry (e.g. to throw NonTransientAiException). | empty |
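To make the retry defaults concrete, the following sketch computes the wait before each retry. It assumes the usual exponential backoff rule (each wait is the previous one times the multiplier, capped at the maximum interval) and that N attempts allow at most N - 1 waits; the class and method names are hypothetical and this is not Spring AI's own retry code.

```java
import java.time.Duration;
import java.util.ArrayList;
import java.util.List;

public class RetryBackoffSketch {

    /** Wait before each retry, assuming wait_k = min(initial * multiplier^k, max). */
    public static List<Duration> backoffSchedule(int maxAttempts, Duration initial,
                                                 double multiplier, Duration max) {
        List<Duration> waits = new ArrayList<>();
        long delayMillis = Math.min(initial.toMillis(), max.toMillis());
        // Assumption: N attempts means at most N - 1 waits between them.
        for (int retry = 1; retry < maxAttempts; retry++) {
            waits.add(Duration.ofMillis(delayMillis));
            // Grow the delay and cap it at the maximum interval.
            delayMillis = (long) Math.min(delayMillis * multiplier, (double) max.toMillis());
        }
        return waits;
    }

    public static void main(String[] args) {
        // Defaults from the table: 10 attempts, 2 s initial interval, multiplier 5, 3 min cap.
        List<Duration> waits =
                backoffSchedule(10, Duration.ofSeconds(2), 5.0, Duration.ofMinutes(3));
        waits.forEach(w -> System.out.println(w.toSeconds() + " s"));
    }
}
```

Under these assumptions the waits grow as 2 s, 10 s, 50 s and then stay at the 3 minute cap, so lowering max-attempts or max-interval is the quickest way to bound the total time spent retrying.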

Connection Properties

The prefix spring.ai.openai is the property prefix that lets you connect to OpenAI.

| Property | Description | Default |
|---|---|---|
| spring.ai.openai.base-url | The URL to connect to. | https://api.openai.com |
| spring.ai.openai.api-key | The API key. | - |

Configuration Properties

The prefix spring.ai.openai.chat is the property prefix that lets you configure the chat client implementation for OpenAI.

| Property | Description | Default |
|---|---|---|
| spring.ai.openai.chat.enabled | Enable the OpenAI chat client. | true |
| spring.ai.openai.chat.base-url | Optional. Overrides spring.ai.openai.base-url to provide a chat-specific URL. | - |
| spring.ai.openai.chat.api-key | Optional. Overrides spring.ai.openai.api-key to provide a chat-specific API key. | - |
| spring.ai.openai.chat.options.model | The OpenAI Chat model to use. | |
| spring.ai.openai.chat.options.temperature | The sampling temperature, which controls the apparent creativity of generated completions. Higher values make output more random, while lower values make results more focused and deterministic. Modifying temperature and top_p for the same completions request is not recommended, because the interaction of these two settings is difficult to predict. | 0.8 |
| spring.ai.openai.chat.options.frequencyPenalty | Number between -2.0 and 2.0. Positive values penalize new tokens based on their existing frequency in the text so far, decreasing the model's likelihood to repeat the same line verbatim. | 0.0f |
| spring.ai.openai.chat.options.logitBias | Modify the likelihood of specified tokens appearing in the completion. | - |
| spring.ai.openai.chat.options.maxTokens | The maximum number of tokens to generate in the chat completion. The total length of input tokens and generated tokens is limited by the model's context length. | - |
| spring.ai.openai.chat.options.n | How many chat completion choices to generate for each input message. Note that you will be charged based on the number of generated tokens across all of the choices. Keep n as 1 to minimize costs. | 1 |
| spring.ai.openai.chat.options.presencePenalty | Number between -2.0 and 2.0. Positive values penalize new tokens based on whether they appear in the text so far, increasing the model's likelihood to talk about new topics. | - |
| spring.ai.openai.chat.options.responseFormat | An object specifying the format that the model must output. | - |
| spring.ai.openai.chat.options.seed | This feature is in Beta. If specified, the system makes a best effort to sample deterministically, such that repeated requests with the same seed and parameters should return the same result. | - |
| spring.ai.openai.chat.options.stop | Up to 4 sequences where the API will stop generating further tokens. | - |
| spring.ai.openai.chat.options.topP | An alternative to sampling with temperature, called nucleus sampling, where the model considers the results of the tokens with top_p probability mass. So 0.1 means only the tokens comprising the top 10% probability mass are considered. Altering this or temperature, but not both, is generally recommended. | - |
| spring.ai.openai.chat.options.tools | A list of tools the model may call. Currently, only functions are supported as a tool. Use this to provide a list of functions the model may generate JSON inputs for. | - |
| spring.ai.openai.chat.options.toolChoice | Controls which (if any) function is called by the model. none means the model will not call a function and instead generates a message. auto means the model can pick between generating a message or calling a function. Specifying a particular function via {"type": "function", "function": {"name": "my_function"}} forces the model to call that function. none is the default when no functions are present. auto is the default if functions are present. | - |
| spring.ai.openai.chat.options.user | A unique identifier representing your end user, which can help OpenAI to monitor and detect abuse. | - |
| spring.ai.openai.chat.options.functions | List of functions, identified by their names, to enable for function calling in a single prompt request. Functions with those names must exist in the functionCallbacks registry. | - |

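Note: You can override the common spring.ai.openai.base-url and spring.ai.openai.api-key with the chat-specific spring.ai.openai.chat.base-url and spring.ai.openai.chat.api-key properties.

Tip: All properties prefixed with spring.ai.openai.chat.options can be overridden at run time by adding request-specific options to the Prompt call.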
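The temperature and topP descriptions above are easier to follow with numbers. The sketch below applies a temperature-scaled softmax to a few made-up token scores and then keeps the smallest set of tokens whose cumulative probability reaches top_p. It is a generic illustration of the two sampling ideas, not OpenAI's or Spring AI's implementation; the class name, method names, and logit values are all hypothetical, and temperature is assumed to be greater than zero.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

public class SamplingSketch {

    /** Softmax over logits divided by the temperature (assumed > 0). */
    public static double[] softmax(double[] logits, double temperature) {
        double max = Double.NEGATIVE_INFINITY;
        for (double l : logits) {
            max = Math.max(max, l / temperature);
        }
        double sum = 0.0;
        double[] probs = new double[logits.length];
        for (int i = 0; i < logits.length; i++) {
            // Subtract the maximum for numerical stability.
            probs[i] = Math.exp(logits[i] / temperature - max);
            sum += probs[i];
        }
        for (int i = 0; i < probs.length; i++) {
            probs[i] /= sum;
        }
        return probs;
    }

    /** Indices of the most probable tokens whose cumulative probability first reaches topP. */
    public static List<Integer> nucleus(double[] probs, double topP) {
        List<Integer> order = new ArrayList<>();
        for (int i = 0; i < probs.length; i++) {
            order.add(i);
        }
        order.sort(Comparator.comparingDouble((Integer i) -> probs[i]).reversed());
        List<Integer> kept = new ArrayList<>();
        double cumulative = 0.0;
        for (int i : order) {
            kept.add(i);
            cumulative += probs[i];
            if (cumulative >= topP) {
                break;
            }
        }
        return kept;
    }

    public static void main(String[] args) {
        double[] logits = {2.0, 1.0, 0.5, -1.0}; // made-up scores for four candidate tokens
        double[] warm = softmax(logits, 0.8);
        double[] cool = softmax(logits, 0.2);
        System.out.println("P(top token) at temperature 0.8: " + warm[0]);
        System.out.println("P(top token) at temperature 0.2: " + cool[0]);
        System.out.println("Tokens kept with top_p = 0.9: " + nucleus(warm, 0.9));
    }
}
```

A lower temperature concentrates probability on the highest-scoring token, and a lower top_p shrinks the candidate set; because both narrow the distribution, changing them together makes the combined effect hard to reason about.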

Chat Options

OpenAiChatOptions.java provides model configurations, such as the model to use, the temperature, the frequency penalty, etc.

On start-up, the default options can be configured with the OpenAiChatClient(api, options) constructor or the spring.ai.openai.chat.options.* properties.

At run time you can override the default options by adding new, request-specific options to the Prompt call. For example, to override the default model and temperature for a specific request:

```java
// Request-specific options take precedence over the configured defaults.
ChatResponse response = chatClient.call(
    new Prompt(
        "Generate the names of 5 famous pirates.",
        OpenAiChatOptions.builder()
            .withModel("gpt-4-32k")
            .withTemperature(0.4f)
            .build()
    ));
```

Tip: In addition to the model-specific OpenAiChatOptions, you can use a portable ChatOptions instance, created with ChatOptionsBuilder#builder().
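The relationship between start-up defaults and request-specific options can be pictured as a field-by-field merge in which any value set on the request wins. The sketch below illustrates that idea with a hypothetical Options record; it is an assumption about the general behavior described above, not the actual OpenAiChatOptions merging code.

```java
public class OptionsMergeSketch {

    /** Hypothetical stand-in for chat options; a null field means "not set". */
    public record Options(String model, Float temperature, Integer maxTokens) {

        /** Returns a copy in which every non-null field of the override replaces this value. */
        public Options mergedWith(Options override) {
            if (override == null) {
                return this;
            }
            return new Options(
                    override.model() != null ? override.model() : model,
                    override.temperature() != null ? override.temperature() : temperature,
                    override.maxTokens() != null ? override.maxTokens() : maxTokens);
        }
    }

    public static void main(String[] args) {
        // Defaults as they might be configured at start-up.
        Options defaults = new Options("gpt-3.5-turbo", 0.7f, 200);
        // A request that overrides only the model and the temperature.
        Options request = new Options("gpt-4-32k", 0.4f, null);
        System.out.println(defaults.mergedWith(request));
    }
}
```

In this sketch the request changes the model and temperature while maxTokens falls back to the default, which mirrors the pirate-names example: only the options you set on the Prompt differ from the configured defaults.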

Function Calling

You can register custom Java functions with the OpenAiChatClient and have the OpenAI model intelligently choose to output a JSON object containing arguments to call one or more of the registered functions. This is a powerful technique for connecting the LLM capabilities with external tools and APIs. Read more about OpenAI Function Calling.
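Conceptually, function calling comes down to a registry of named functions plus a dispatch step: the model returns a function name and arguments, and the application looks the function up and invokes it. The sketch below shows only that mechanism with plain Java types. Every name in it (FunctionRegistrySketch, ToolCall, currentWeather) is hypothetical, the arguments are assumed to be already parsed from JSON, and it does not use or reproduce the Spring AI function-callback API.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.function.Function;

public class FunctionRegistrySketch {

    /** Hypothetical shape of a tool call: the function name plus already-parsed arguments. */
    public record ToolCall(String name, Map<String, Object> arguments) {}

    private final Map<String, Function<Map<String, Object>, String>> functions =
            new LinkedHashMap<>();

    /** Registers a function under the name the model will use to refer to it. */
    public void register(String name, Function<Map<String, Object>, String> fn) {
        functions.put(name, fn);
    }

    /** Looks up the requested function and invokes it with the model-supplied arguments. */
    public String dispatch(ToolCall call) {
        Function<Map<String, Object>, String> fn = functions.get(call.name());
        if (fn == null) {
            throw new IllegalArgumentException("No function registered as '" + call.name() + "'");
        }
        return fn.apply(call.arguments());
    }

    public static void main(String[] args) {
        FunctionRegistrySketch registry = new FunctionRegistrySketch();
        // A stub function; a real one would call an external service.
        registry.register("currentWeather", params -> "22 C in " + params.get("location"));

        // Pretend the model asked for this call.
        ToolCall call = new ToolCall("currentWeather", Map.of("location", "Paris"));
        System.out.println(registry.dispatch(call));
    }
}
```

The result of the dispatched function is normally sent back to the model as a follow-up message so it can compose the final answer; that round trip is omitted here.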

Sample Controller (Auto-configuration)

Create a new Spring Boot project and add the spring-ai-openai-spring-boot-starter to your pom (or gradle) dependencies.

Add an application.properties file, under the src/main/resources directory, to enable and configure the OpenAI Chat client:

```properties
spring.ai.openai.api-key=YOUR_API_KEY
spring.ai.openai.chat.options.model=gpt-3.5-turbo
spring.ai.openai.chat.options.temperature=0.7
```

Tip: Replace YOUR_API_KEY with your OpenAI credentials.

This will create an OpenAiChatClient implementation that you can inject into your class. Here is an example of a simple @RestController class that uses the chat client for text generation.

```java
@RestController
public class ChatController {

    private final OpenAiChatClient chatClient;

    // The auto-configured OpenAiChatClient is injected by Spring.
    @Autowired
    public ChatController(OpenAiChatClient chatClient) {
        this.chatClient = chatClient;
    }

    // Blocking call: returns the whole generation in one response.
    @GetMapping("/ai/generate")
    public Map<String, String> generate(@RequestParam(value = "message", defaultValue = "Tell me a joke") String message) {
        return Map.of("generation", chatClient.call(message));
    }

    // Streaming call: emits response chunks as they arrive.
    @GetMapping("/ai/generateStream")
    public Flux<ChatResponse> generateStream(@RequestParam(value = "message", defaultValue = "Tell me a joke") String message) {
        Prompt prompt = new Prompt(new UserMessage(message));
        return chatClient.stream(prompt);
    }
}
```

Manual Configuration

The OpenAiChatClient implements the ChatClient and StreamingChatClient interfaces and uses the Low-level OpenAiApi Client to connect to the OpenAI service.

Add the spring-ai-openai dependency to your project's Maven pom.xml file:

```xml
<dependency>
    <groupId>org.springframework.ai</groupId>
    <artifactId>spring-ai-openai</artifactId>
</dependency>
```

or to your Gradle build.gradle build file:

```groovy
dependencies {
    implementation 'org.springframework.ai:spring-ai-openai'
}
```

Tip: Refer to the Dependency Management section to add the Spring AI BOM to your build file.

Next, create an OpenAiChatClient and use it for text generation:

```java
var openAiApi = new OpenAiApi(System.getenv("OPENAI_API_KEY"));

// Default options apply to every request unless the Prompt overrides them.
var chatClient = new OpenAiChatClient(openAiApi)
    .withDefaultOptions(OpenAiChatOptions.builder()
        .withModel("gpt-3.5-turbo")
        .withTemperature(0.4f)
        .withMaxTokens(200)
        .build());

ChatResponse response = chatClient.call(
    new Prompt("Generate the names of 5 famous pirates."));

// Or with streaming responses
Flux<ChatResponse> streamResponse = chatClient.stream(
    new Prompt("Generate the names of 5 famous pirates."));
```

OpenAiChatOptions provides the configuration information for the chat requests. OpenAiChatOptions.Builder is a fluent options builder.

Low-level OpenAiApi Client

OpenAiApi 提供适用于 OpenAI Chat API 的轻量级 Java 客户端 OpenAI 聊天 API。

The OpenAiApi provides is lightweight Java client for OpenAI Chat API OpenAI Chat API.

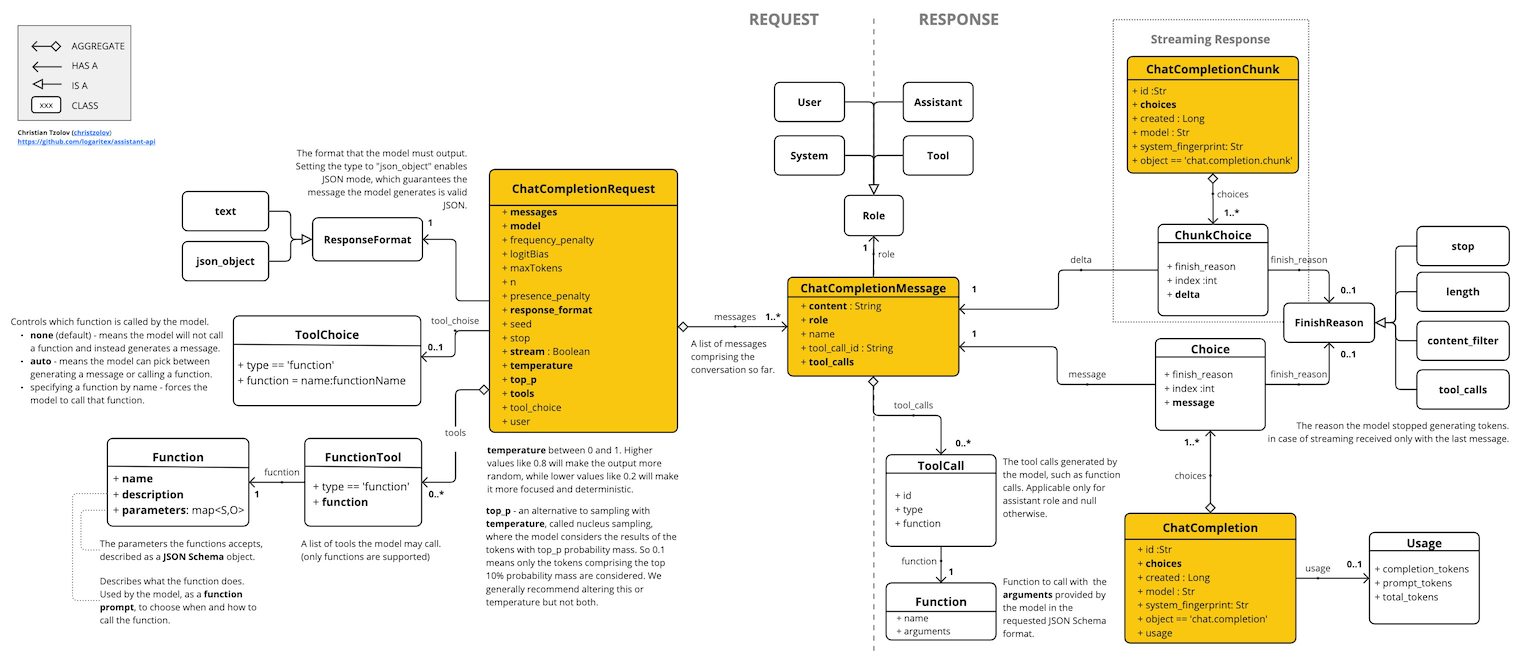

下面的类图说明了 OpenAiApi 聊天接口和构建块:

Following class diagram illustrates the OpenAiApi chat interfaces and building blocks:

下面是一个简单的片段,说明如何以编程方式使用 API:

Here is a simple snippet how to use the api programmatically:

```java
OpenAiApi openAiApi =
    new OpenAiApi(System.getenv("OPENAI_API_KEY"));

ChatCompletionMessage chatCompletionMessage =
    new ChatCompletionMessage("Hello world", Role.USER);

// Sync request
ResponseEntity<ChatCompletion> response = openAiApi.chatCompletionEntity(
    new ChatCompletionRequest(List.of(chatCompletionMessage), "gpt-3.5-turbo", 0.8f, false));

// Streaming request
Flux<ChatCompletionChunk> streamResponse = openAiApi.chatCompletionStream(
    new ChatCompletionRequest(List.of(chatCompletionMessage), "gpt-3.5-turbo", 0.8f, true));
```

See the OpenAiApi.java JavaDoc for further information.
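For orientation, the sketch below builds the kind of HTTP request that a chat completion call corresponds to, using only the JDK's java.net.http types. The endpoint path, bearer authorization header, and JSON fields follow the public OpenAI Chat Completions API; the class and method names are hypothetical, the JSON is assembled by hand purely for illustration, and nothing here claims to mirror how OpenAiApi is implemented internally. The request is built but not sent.

```java
import java.net.URI;
import java.net.http.HttpRequest;

public class RawChatRequestSketch {

    /** Builds a minimal chat completion body with a single user message. */
    public static String requestBody(String model, String userMessage,
                                     double temperature, boolean stream) {
        // Minimal escaping for the illustration; a real client would use a JSON library.
        String escaped = userMessage.replace("\\", "\\\\").replace("\"", "\\\"");
        return "{\"model\":\"" + model + "\","
                + "\"messages\":[{\"role\":\"user\",\"content\":\"" + escaped + "\"}],"
                + "\"temperature\":" + temperature + ","
                + "\"stream\":" + stream + "}";
    }

    /** Builds (but does not send) the POST request for the chat completions endpoint. */
    public static HttpRequest chatRequest(String baseUrl, String apiKey, String body) {
        return HttpRequest.newBuilder(URI.create(baseUrl + "/v1/chat/completions"))
                .header("Authorization", "Bearer " + apiKey)
                .header("Content-Type", "application/json")
                .POST(HttpRequest.BodyPublishers.ofString(body))
                .build();
    }

    public static void main(String[] args) {
        // Falls back to a placeholder so the sketch runs without a real key.
        String apiKey = System.getenv().getOrDefault("OPENAI_API_KEY", "YOUR_API_KEY");
        String body = requestBody("gpt-3.5-turbo", "Hello world", 0.8, false);
        HttpRequest request = chatRequest("https://api.openai.com", apiKey, body);
        System.out.println(request.method() + " " + request.uri());
        System.out.println(body);
    }
}
```

Seeing the raw request makes it clearer what the constructor arguments of ChatCompletionRequest stand for: the message list, the model name, the temperature, and the stream flag.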

Example Code

- The OpenAiApiIT.java test provides some general examples of how to use the lightweight library.

- The OpenAiApiToolFunctionCallIT.java test shows how to use the low-level API to call tool functions. It is based on the OpenAI Function Calling tutorial.